Companies are constantly evolving

IT systems continue to develop at breakneck speed. For all the progress this brings, it also means that transformation is never finished and remains a permanent work in progress. Process efficiencies in particular must be constantly readjusted and optimized. This involves three main dimensions: the business ecosystem, processes, and systems. All three evolve at very different speeds: the business ecosystem adopts changes quickly; processes follow, but often cannot keep up with the required changes; systems are slower still, because of the time required for design and implementation.

This means that processes and application systems contain an excess of legacy elements.

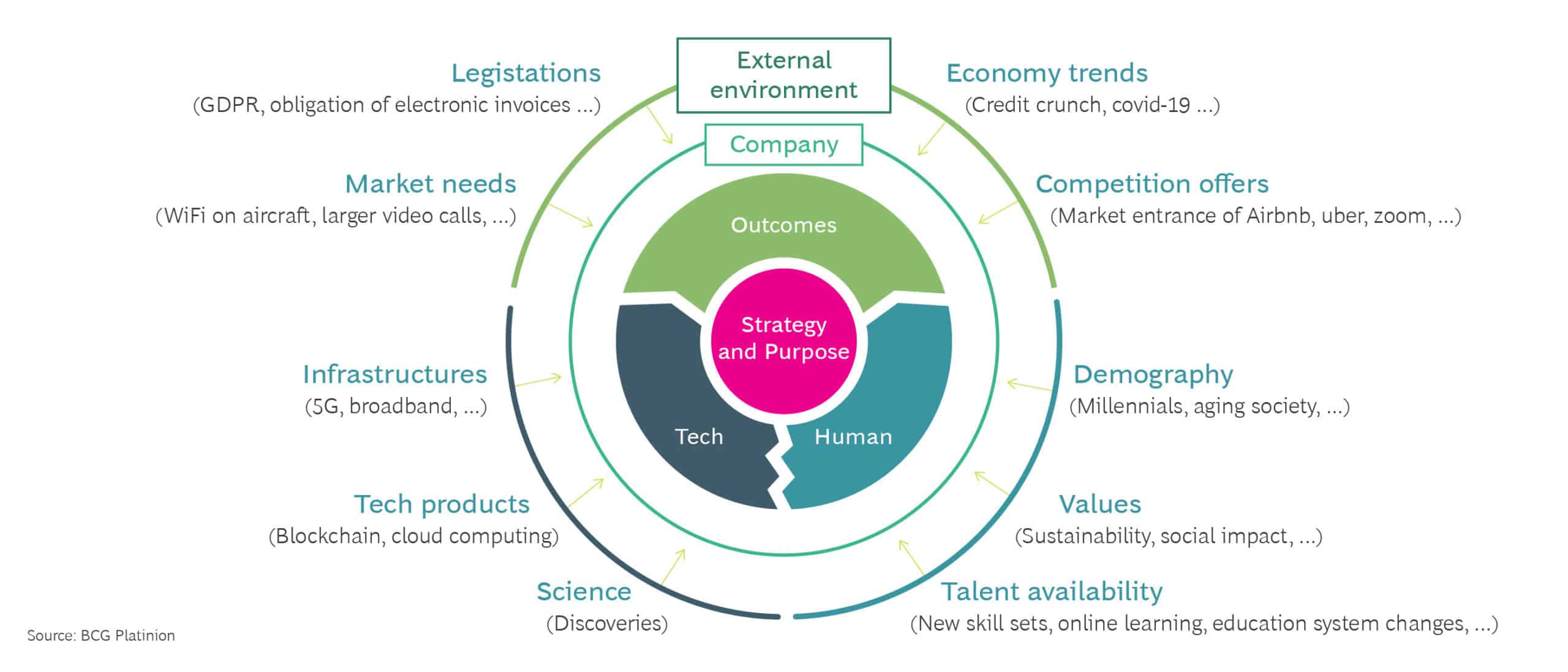

Changes in the external environment require continuous adaptation

These legacy elements are no longer up to date or may even be superfluous, which has a negative impact on the company’s overall efficiency.

Greenfield and brownfield transformations

To manage the resulting transformation needs, the most suitable approach must be identified. IT managers can choose a greenfield or a brownfield approach, which means either a completely new implementation or a gradual system conversion.

The greenfield transformation represents a complete restart of the company’s own processes and workflows, which is often not desirable and can be complicated by the existing IT structure or legal requirements. In such cases, a brownfield approach is recommended. This involves upgrading systems, integrating current systems into new ones, or implementing new features to support process evolution.

So how do changes take place?

The purpose of the transformation is to simplify processes and better adapt them to the needs of organizations by removing legacy elements. However, the brownfield approach in particular runs the risk of creating costly, overly complicated processes by intertwining different systems in cumbersome ways.

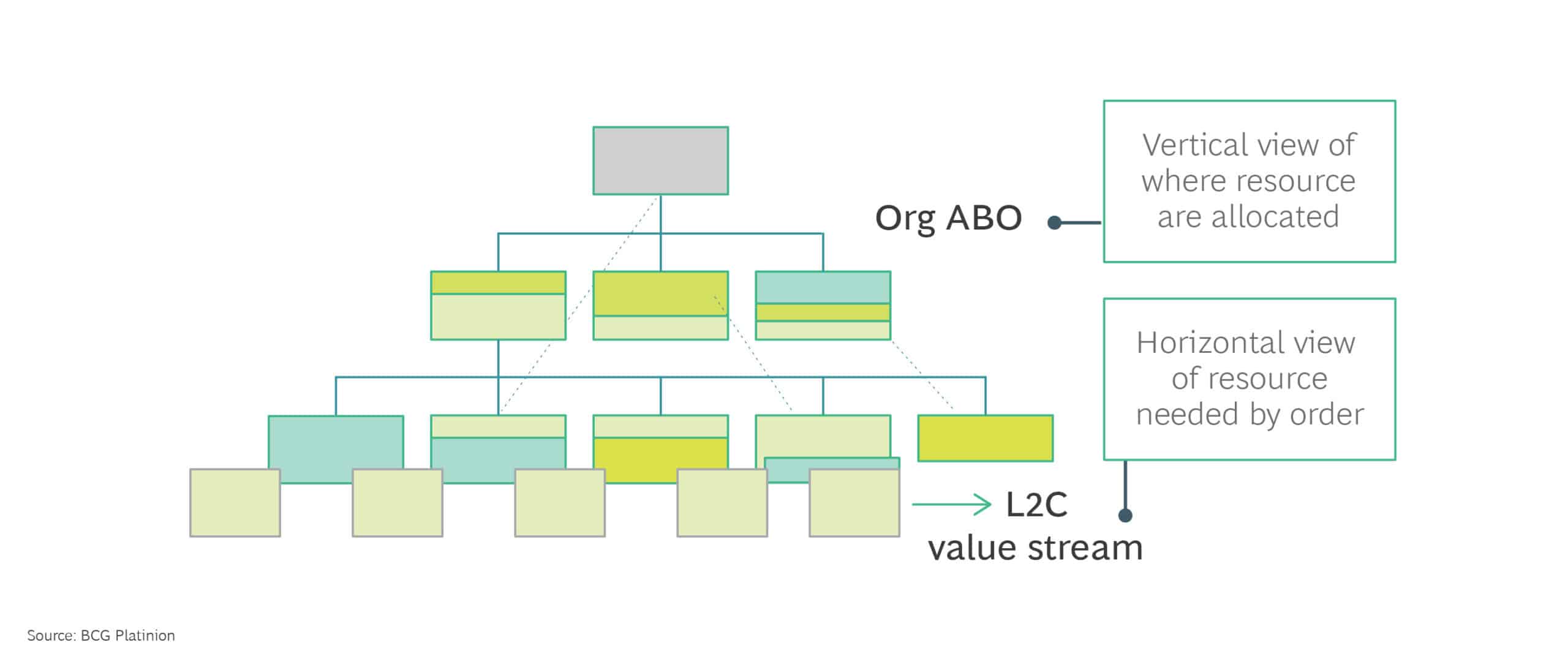

ABO analysis creates a new level of transparency into what your resources are doing and how they perform

The activity-based view is complementary to value stream mapping

Three-step, four-week analysis, supported by a digital platform

Three-step process

These issues are typically addressed by the process owners, who analyze the documentation and interview the stakeholders involved. From this, they design future processes by simplifying and standardizing the individual components.

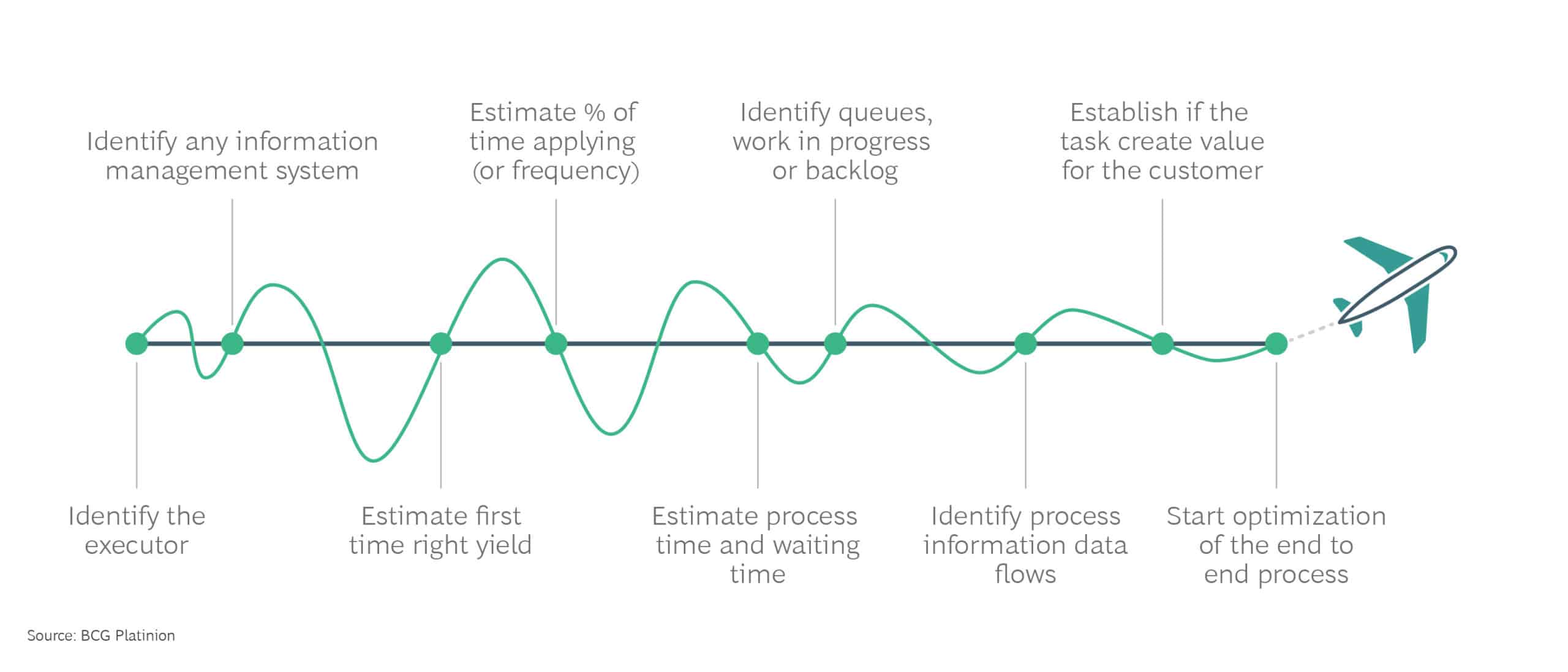

The most commonly used methodology is the so-called Value Stream Mapping (VSM), the mantra of process management. It is not limited to pure process mapping, but rather provides an opportunity to methodically identify all areas for process improvement.

VSM is more than process mapping. It is a journey that clarifies the view of running business processes

Today, automated software tools, commonly known as Process Mining Systems or Execution Management Systems, can strongly support the analysis and synthesis activities of Value Stream Mapping and the overall transformation.

They capture the evolution of a given process throughout its lifecycle and highlight any changes, whether intended or derived. Analysts can thus get a broad overview without sacrificing the level of detail.

The discussed systems do not replace the work of humans, who remain firmly in charge of analysis and process optimization. Instead, they support them by providing a comprehensive end-to-end view of the process ecosystem.

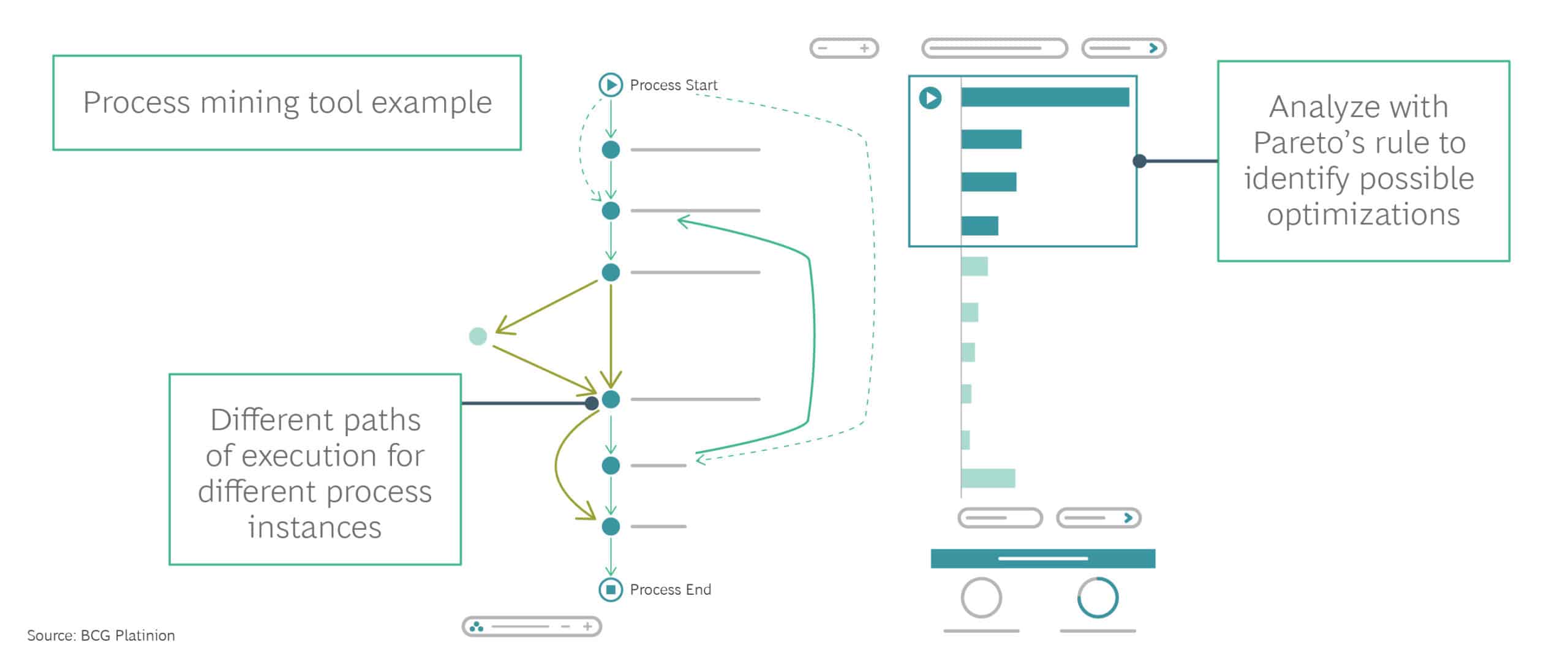

So, how does process mining help?

Process mining helps in understanding the number of resources and activities required for each process and in assessing whether its execution follows the “happy path” of an error-free workflow. Analysts also gain valuable insights into how current processes can be optimized, either by modifying systems with surgical precision or by implementing automation.
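The happy-path check described above can be illustrated with a minimal sketch. It assumes a simplified event log of (case, activity, timestamp) rows; the log contents, the `HAPPY_PATH` definition, and the function names are hypothetical and are not taken from any specific process mining product.

```python
from collections import Counter, defaultdict

# Hypothetical event log: one row per executed activity.
# Columns: case identifier, activity name, timestamp (here a simple counter).
EVENT_LOG = [
    ("order-1", "Create Order", 1),
    ("order-1", "Approve Order", 2),
    ("order-1", "Ship Goods", 3),
    ("order-2", "Create Order", 1),
    ("order-2", "Approve Order", 2),
    ("order-2", "Change Order", 3),
    ("order-2", "Approve Order", 4),
    ("order-2", "Ship Goods", 5),
    ("order-3", "Create Order", 2),
    ("order-3", "Approve Order", 4),
    ("order-3", "Ship Goods", 7),
]

# Assumed definition of the error-free workflow for this example.
HAPPY_PATH = ("Create Order", "Approve Order", "Ship Goods")


def build_traces(event_log):
    """Group events by case and order them by timestamp."""
    by_case = defaultdict(list)
    for case_id, activity, timestamp in event_log:
        by_case[case_id].append((timestamp, activity))
    return {
        case_id: tuple(activity for _, activity in sorted(events, key=lambda e: e[0]))
        for case_id, events in by_case.items()
    }


def happy_path_report(event_log, happy_path):
    """Return variant counts, deviating cases, and the happy-path share."""
    traces = build_traces(event_log)
    variants = Counter(traces.values())
    deviating = sorted(case for case, trace in traces.items() if trace != happy_path)
    share = variants[happy_path] / len(traces) if traces else 0.0
    return variants, deviating, share


if __name__ == "__main__":
    variants, deviating, share = happy_path_report(EVENT_LOG, HAPPY_PATH)
    print(f"Happy-path share: {share:.0%}")
    print("Deviating cases:", deviating)
```

In this toy log, the case with the extra change and re-approval loop is the one an analyst would inspect first. Real tools work on far larger logs and use more tolerant conformance measures than exact sequence matching.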

The solutions can also harvest logging information from a variety of systems and identify links between transactional elements on different systems. This represents the first step in carrying out a specific analysis of process instances.
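As a rough sketch of what linking transactional elements across systems can look like, the example below merges log extracts from two systems into one timeline per process instance. The two systems ("ERP" and "Billing"), their field names, and the shared order reference are assumptions made for illustration only.

```python
from collections import defaultdict

# Hypothetical log extract from an ERP system.
ERP_LOG = [
    {"order_no": "4711", "step": "Order Created", "ts": "2023-03-01T09:00:00"},
    {"order_no": "4711", "step": "Goods Shipped", "ts": "2023-03-03T15:30:00"},
    {"order_no": "4712", "step": "Order Created", "ts": "2023-03-02T10:00:00"},
]

# Hypothetical log extract from a billing system.
# It refers to the ERP order through the "order_ref" field.
BILLING_LOG = [
    {"invoice_id": "INV-9", "order_ref": "4711", "event": "Payment Received", "ts": "2023-03-10T12:00:00"},
    {"invoice_id": "INV-9", "order_ref": "4711", "event": "Invoice Sent", "ts": "2023-03-04T08:00:00"},
]


def link_logs(erp_log, billing_log):
    """Merge both logs into one chronological timeline per order.

    Timestamps are ISO 8601 strings in the same format, so sorting
    them as text also sorts them chronologically.
    """
    timeline = defaultdict(list)
    for row in erp_log:
        timeline[row["order_no"]].append((row["ts"], "ERP", row["step"]))
    for row in billing_log:
        timeline[row["order_ref"]].append((row["ts"], "Billing", row["event"]))
    return {case: sorted(events) for case, events in timeline.items()}


if __name__ == "__main__":
    for case, events in link_logs(ERP_LOG, BILLING_LOG).items():
        print(case)
        for ts, system, activity in events:
            print(f"  {ts}  [{system}]  {activity}")
```

The merged timeline is the kind of per-instance view on which further analysis can build. In practice, the linking key is rarely this clean and often has to be derived from several fields.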

Process mining solutions can also actively interact with systems, typically by accessing transactions and transaction data or by inserting themselves into the integration layer in a way that is usually transparent to the system itself. This allows them to identify errors and, once the root causes are known, fix them during process execution.

Once the system has been built, it is possible to visually analyze the performance of the various processes and consider how they can be optimized further. From this perspective, the process mining system represents an asset that facilitates transformation by making the existing processes visible and transparent.

- In digital transformation processes: Process mining supports both the analysis and the test phases. It provides an easy way to analyze process behavior and recognize possible deviations from the happy path. Additionally, it offers a quick and reliable overview of how a system has behaved over the entire course of its life.

- In carve-out or post-merger & acquisition integration: The processes in both companies are precisely compared and any deviations from existing plans are recorded. This allows for a better understanding of the as-is, target and transitional situations.

- During optimization of internal processes: The process mining system is used to effectively identify all possible options for automation and optimization for a given set of existing processes.

In this case, the benefit is distributed across all the analyzed processes as well as the systems that implement them – both original and final ones. This guarantees maximum transparency for all transformations that have taken place.

Conclusion

By applying Artificial Intelligence (AI) algorithms and relational logic, information can be extracted that is essential for optimizing the value stream as well as process performance and transparency. Regardless of the area in which it is used, the investment in process mining pays off: with this methodology, inefficiencies in companies can be drastically reduced.

Potential savings range from 20% for relatively simple process flows to more than 80% for complex, multi-layered processes.

About the Authors

Riccardo Palozzi

Principal IT Architect, Milan, Italy

Riccardo started coding at the age of seven because he wanted to play games, and then coded for fifteen years, contributing routines to many Texas Instruments and PC-based games. He holds a Master of Computer Science and has a strong track record as a developer, architect and program manager, with experience in telco, energy, consumer and banking. Driven by curiosity, he decided to move into IT strategy and joined BCG Platinion in 2017.

Salvatore Cali

BCG Partner & Associate Director, Rome, Italy

Salvatore is an Associate Director & Partner in the Rome office of The Boston Consulting Group (BCG). He is a member of the BCG Lean experts network and a certified Six Sigma Master Black Belt. He has worked extensively with clients across industries on issues related to lean services, with a focus on lean enablement in support functions (including finance, IT, and sales and marketing), the effectiveness and efficiency of processes, and process excellence programs. Salvatore also has more than 14 years of relevant experience in Oil & Gas and Healthcare services, where he held several leadership positions in Engineering, Marketing, Operations and Quality.